SOMA is the game that keeps on giving, at least when it comes to questions about humanity and the nature of reality. In a previous article, we touched on the idea of consciousness and the point at which You 2.0 stops being You and starts being Their Own Person. Within that is the question of whether a digital copy of our analogue brains can really count as being “human,” or whether it is, to quote Mass Effect 3, a pale imitation of the real thing.

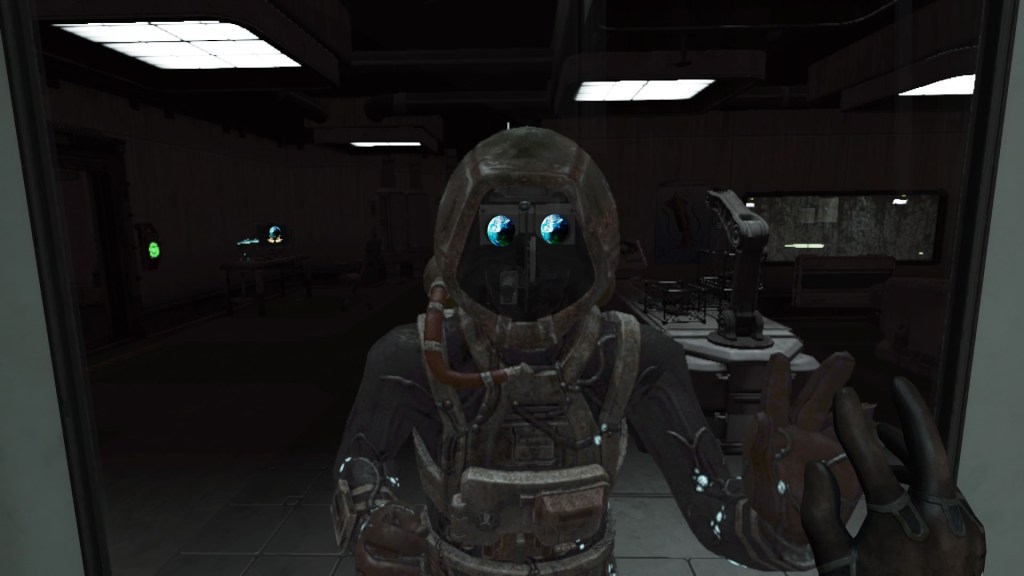

This idea of a digital version of You living on in a digital version of Your World, while the biological you and the physical world that we know fade away, is prevalent throughout SOMA. We meet numerous robots who seem to believe that they are still human, despite their very industrial robot bodies (and their very disposable nature, which Simon, and the player, explore throughout the game).

The game asks the player where the line between consciousness and programming lies, but never offers its own “truth” as an answer. Catherine, Simon’s intrepid digital guide who was once human herself, talks about the “coin toss”: the entirely fabricated idea that by copying one’s consciousness into another body, one essentially body-hops into it. Unfortunately, this isn’t correct, and she says so herself. What actually happens is that the consciousness splits. Think of it like a family tree, with a new branch splitting off with each “copy” that is made. No coin toss occurs; there are two (or more) separate beings with a common ancestor.

Except in this case, the common ancestor is your personality, your memories, and your past. And the branches are all clones of what came before, left to their own devices to live their own lives based on Who You Are.

It makes your brain hurt after a while.

One aspect of this new digital existence that the game explores is the WAU system. The questions the WAU’s existence raises are every bit as fascinating as the discussion surrounding consciousness and the line between You and Copy You, with implications that are just as far-reaching.

Get Your WAU On

In SOMA, the WAU is an artificial intelligence working alongside the humans of the underwater PATHOS-II station to preserve humanity after a cataclysmic, literally earth-shattering event. “WAU” stands for Warden Unit, and as its name implies, the WAU is responsible for the wellbeing of humanity, or rather, the wellbeing of human consciousnesses.

In theory, the WAU is something called an artificial general intelligence: a system that is (hypothetically) able to learn and perform any task a human can. This is the type of AI most often found in science fiction stories, and it is perfected in the fantastic Detroit: Become Human.

Unfortunately, the WAU doesn’t have a clear understanding of what humanity is.

Par for the course, since we fully fledged humans also often have trouble defining Humanity without resorting to shared experiences that cannot easily be reduced to lines of code.

So at this point, we have an AI, touted as being able to learn anything a human can, trying to apply “save humanity” to a situation in which even the humans can’t figure out what “saving humanity” looks like.

What could go wrong?

Oh.

Apparently a lot.

Parallel to the WAU’s programming is our friend Catherine’s ARK project, which sought to digitize our analogue brains in order to preserve human consciousness (or a technological facsimile of it). So now we have two huge players in the arena: one human trying to digitally preserve a copy of human consciousness, and one robot trying to preserve the nebulous idea of “humanity.” Although the WAU is one of the main antagonists in the game, and Catherine is portrayed as the dependable (sort of) sidekick, they are on similar paths.

Catherine sought to preserve people’s consciousnesses in order to load them into the ARK and shoot it into space, where the lines of code would happily exist for a thousand years, or until the power supplies malfunctioned. This had an unintended consequence: some humans, psychologically unable to fathom there being Two Of Them, committed suicide to remedy it. Others, hoping to preserve “continuity of consciousness” (which, as we discussed last time, is a fallacy), killed themselves so that they could experience “winning the coin toss” and body-jumping into their new robot home.

So… good intentions, but kind of failing even as she succeeded.

The WAU, on the other hand, tasked with preserving humanity, began taking these consciousness copies and putting them into robot bodies. The human consciousnesses in robot bodies coped with no longer being human by blocking out their reality and creating an imaginary world that they would live in forever, or until their battery packs wore out. Or, if the WAU put the consciousnesses into robot/human hybrids, they promptly went insane, unable to handle their reality. OR it would take humans still in their biological bodies and hook them up to permanent life support, so that their bodies would stay alive even if, as in the case of the last human on earth, they didn’t believe they were truly living anymore, merely existing.

So… good intentions, but kind of failing even as it was successful.

Which circles us back around to the big questions: what is consciousness, what is humanity, and what did the WAU ultimately fail to capture, such that it ended up further destroying the world, failing at its job even as it technically flourished?

But first, we need to take a step back and examine Simon’s own morality – his own humanity – and how it evolved over the course of the game.

Morality and AI

Our friend Simon progresses through a number of stages in his own journey toward understanding what constitutes life and a life worth living and preserving. We can trace this through how he approaches shutting down robots as the game progresses. Through his actions, we can see his evolving thinking about “life,” from the very first robot he turns off to his interaction with the last human alive, and perhaps evolve our own thinking along the way.

The very first robot Simon unplugs is a poor, boxy-looking thing sitting on the floor, shivering, plugged into a computer console. The story forces the issue, as the only way to progress the plot is to unplug it from its power supply, at which point the robot, distraught, asks why you unplugged it, since it was happy.

When I played the game, I was very concerned that SOMA was going to turn into “that kind” of game, one that forces you to make bad choices and then scolds you for them. But what happens is so much worse.

At this point, even though the robot had a clear preference for being plugged in, Simon’s own journey is just beginning. He is an innocent in this world, seeing a clear distinction between “human” and “machine,” so while the robot’s words were perhaps sad, it was just a line of code. A program. A video game to be turned on and off.

We progress through the game, and eventually come across a human being kept alive by the WAU. Unfortunately, Simon must also unplug this person in order to advance the plot, but this time, both player and Simon seem to react differently. It’s a human, but one kept physically alive by a machine, necessarily attached to it and unable to move. Unnerving, but what kind of life is it for a person to be hooked into a machine like that? Simon pushes on, dismissing this unplugging as necessary, or even a mercy, even though the human might have disagreed with him. At this point, perhaps, the first shred of doubt about what he is really doing enters his mind.

It smacks him in the face after he is copied into the diving suit (Simon 3, for those of you who were here last time we talked about this game). He’s then faced with a choice: leave his “old self” (Simon 2) behind, unconscious, until his batteries run out, or actively unplug him, effectively killing him. Either way, Simon 2’s fate is sealed and, either way, Simon 3 is the cause of his death.

At this point, that is how Simon 3 interprets what he’s doing: he is killing a person, even though the object in the chair is a robot with digital code for brains, and not a flesh-and-blood human. This knowledge begins to weigh on him, and he slowly begins to question his own humanity, the humanity of the robot bodies with human consciousnesses (which went mad; we’ll talk about them below), and the humanity of the coded copies of consciousnesses awaiting the ARK’s launch.

Under this weight, Simon meets the last human being on earth, attached to the WAU in order to preserve her life. She asks Simon to kill her, saying that her life, preserved in this way by the WAU, is not worth living. So different from the first human Simon met attached to the WAU, but then again, Simon is different now, too. He has progressed from an innocent, unplugging robots and hybrids without a thought for what he’s doing, to acting while knowing what he is truly “shutting off,” to being a weary man, questioning his own existence and whether who he is, and how he is, is worth preserving, since the “real Simon” died over a century earlier.

The question he is asking, then, is whether what he did was good or bad. He killed “people”; he ended their awareness, but were they really people? Or copies? Is there a difference? Which is worth saving: you, or a digital copy that You will never be aware of?

What makes life worth living? What makes life worth saving?

What’s It All About, Ambi?

My first job was working with individuals with profound disabilities. They were wonderful, and they each still have a special place in my heart. We’re talking quite profound, though: medically complex, not aware of self or place, some in varying degrees of constant pain. Almost all of them were alive because extraordinary means had been taken to save them as infants (failure to thrive, organ failure, or organs failing to develop properly were all to blame), and here they were as adults with gastric tubes and diapers, buckled into wheelchairs, some in pain, needing 24-hour personal and medical care, and, as far as anyone could tell, unaware of their surroundings beyond whatever immediate stimulus they were experiencing.

It really made me – and my coworkers – question what it means to truly “have a life,” rather than just “existing” or “being alive.” We asked ourselves, and sometimes each other, what we thought a life with meaning really was. The hardest question I was ever asked by one of my students was, “If you found out that your baby would be like [name of client], would you go through extraordinary means to save them?”

What they were asking was, “What makes a life worth preserving?” or “When does living become existing?”

It’s this question that the WAU fails to really answer, or rather, that it answers with “existing.”

The WAU is unable to save the physical, biological humans, and so creates half-robot facsimiles with human consciousness code. But these creatures go insane, because, as Catherine explains, they aren’t able to cope with the plight of their existence. But the WAU doesn’t understand this. The WAU doesn’t know what it means to “have a life,” and so follows an incomplete program, creating incomplete lifeforms that are alive, but not necessarily “having lives” as we might define them.

The ARK project likewise tries to answer this question, except this time a human is at the helm, trying to capture what a human might think is “a life worth living.” In this case, the ARK holds the coded memories, personalities, and histories of humans, inserted into computer-generated worlds programmed to their liking. This allows them an existence reminiscent of what they knew on Earth, while also acknowledging (to the digital people) that they are no longer humans in the physical sense, and that this isn’t a physical life anymore.

And the digital people seem to be okay with that.

So what are we, if not our memories? What are our lives, if not our histories, what we’ve done, how we’ve felt, and the things we’ve thought and experienced? It seems that even if this type of consciousness is fabricated, or experienced by someone else (even a digital someone), it reads as real. It reads as existence. It reads as a life that has been preserved, in some form, anyway.

One last thought to chew on, though: consciousness, as we define it now, is finite. Everything else is an illusion. So:

When does living become existing? What makes a life A Life? What gives it meaning? Can that be captured digitally and maintain the fidelity we are used to? Let me know in the comments.

Thanks for stopping by, and I’ll see you soon!

~Athena

Do you like what you’ve read? Become a revered Aegis of AmbiGaming and show your support for small creators and for video games as a serious, viable, and relevant medium!
